March 3rd, 2026

Criteria-based assessment

Evaluate each submission against assessment criteria shared across all questions.

Until now, Evalmee calculated a single overall grade per submission. Now, you can define cross-cutting assessment criteria at the exam level, and each question will be graded against those criteria. The result: in addition to the overall grade, each examinee receives a grade per assessment criterion.

This is the missing piece for implementing a competency-based approach or an RNCP assessment framework, or simply for giving richer feedback to your examinees. Whether you call them criteria, competencies, learning outcomes, or skills, the principle is the same: you break down your assessment and get a grade per criterion across the entire exam.

How it works:

Define your assessment criteria at the exam level (e.g., Methodological rigor, Quality of analysis, Relevance of recommendations, or your competencies themselves)

Configure each question against these criteria: choose which criteria apply, write marking instructions tailored to the question, and assign points per criterion

Trigger our AI grading agent: based on your rubrics and instructions, our grading agent suggests grades and feedback criterion by criterion, question by question. The AI doesn't grade "on its own": it applies your criteria, your instructions, your rubric. The more precise your instructions, the more accurate the suggestions, just as when you delegate grading to colleagues.

You have the final say: review, adjust if needed, then approve. Nothing is validated without your sign-off.

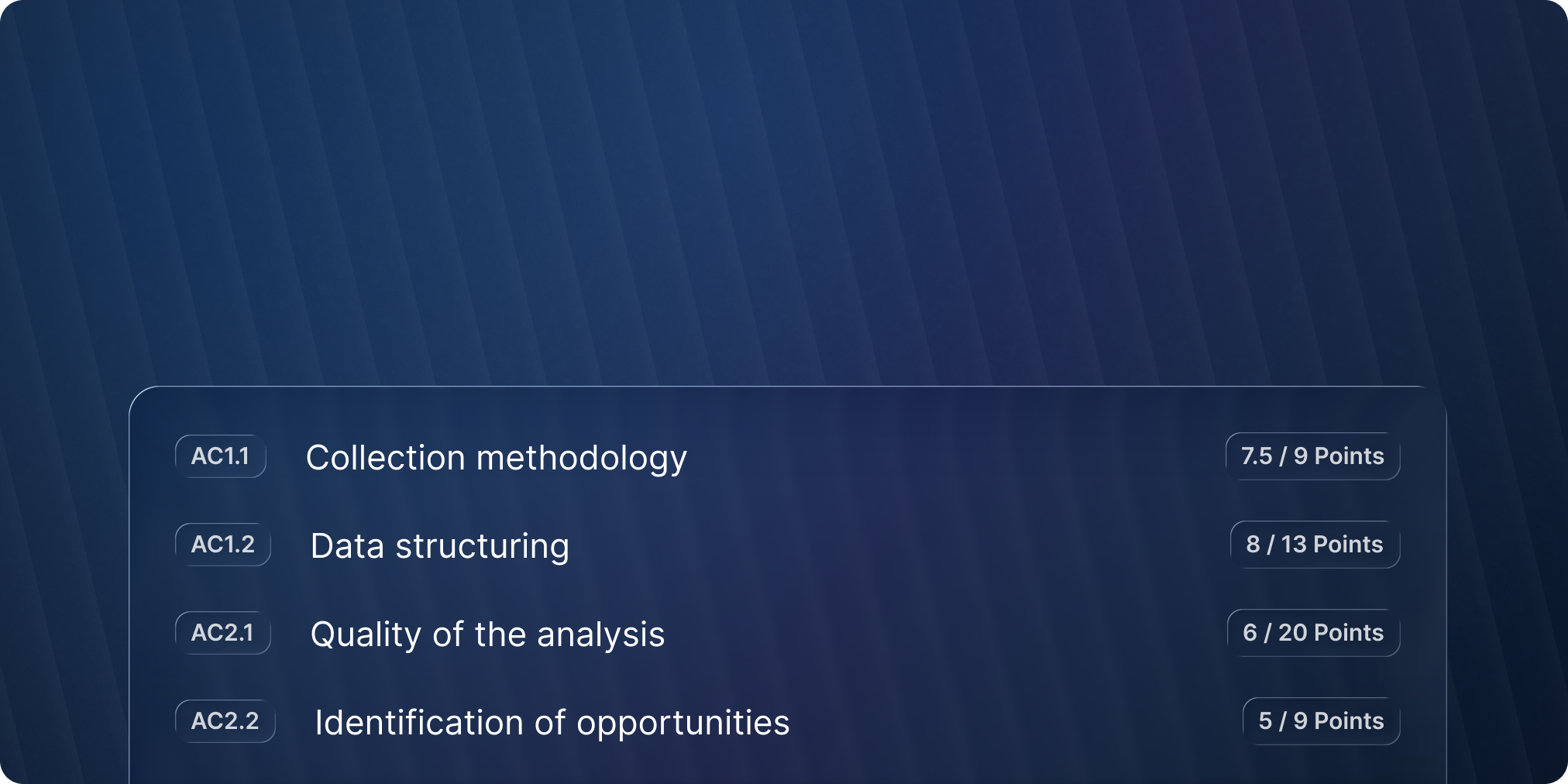

View the results: each submission displays the overall grade and the breakdown by criterion
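To make the idea of a per-criterion breakdown concrete, here is a minimal sketch of how points assigned per question and per criterion could roll up into one grade per criterion plus an overall grade. It assumes a simple sum of points; the data layout, function names, and numbers are hypothetical and are not Evalmee's data model or its actual calculation.

```python
from collections import defaultdict

# Hypothetical rows: (question, criterion, points awarded, points available).
# Illustrative values only, not taken from Evalmee.
scores = [
    ("Q1", "Methodological rigor", 3.0, 4.0),
    ("Q1", "Quality of analysis", 2.0, 2.0),
    ("Q2", "Quality of analysis", 1.5, 3.0),
    ("Q2", "Relevance of recommendations", 0.0, 1.0),
]


def grades_by_criterion(rows):
    """Sum awarded and available points per criterion across all questions."""
    totals = defaultdict(lambda: [0.0, 0.0])
    for _question, criterion, awarded, available in rows:
        totals[criterion][0] += awarded
        totals[criterion][1] += available
    return {name: (a, m) for name, (a, m) in totals.items()}


def overall_grade(rows):
    """Sum awarded and available points over every question and criterion."""
    return sum(r[2] for r in rows), sum(r[3] for r in rows)


breakdown = grades_by_criterion(scores)
overall = overall_grade(scores)

for name, (awarded, available) in breakdown.items():
    print(f"{name}: {awarded}/{available}")
print(f"Overall: {overall[0]}/{overall[1]}")
```

In this sketch, a criterion that applies to several questions (here "Quality of analysis") accumulates points from each of them, which is what lets one exam yield a grade per competency.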

In practice

Competency-based assessment: define one criterion per competency and automatically get a competency grade across all questions. Ideal for evaluating proficiency levels (Achieved / Partially achieved / Not achieved)

RNCP certification: directly produce the per-criterion grades expected by the certification panel, aligned with your assessment framework. A considerable time saver and more precise decision support for your panels.

Enhanced pedagogical feedback: give each examinee detailed feedback per criterion, not just an overall grade. Your examinees know exactly what to improve, and your teaching teams can identify recurring weaknesses across a cohort.


MCQ rubric modification after the exam

The most requested feature is here. Until now, a poorly calibrated MCQ rubric meant Excel exports and manual recalculations to fix things.

That's over: modify your MCQ rubric at any time, even after examinees have taken the exam. Penalties too harsh, crushing everyone's grades? Rubric too generous, not reflecting the actual level? Adjust the scoring rules, and all MCQs in the exam are re-graded and grades recalculated instantly, so the results finally reflect the rubric you had in mind.
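As an illustration of why a rubric change can be applied after the fact, the sketch below re-grades stored answers under two different rubrics. It assumes a rubric made of points for a correct answer, a penalty for a wrong one, and points for a blank; the `McqRubric` class, the function names, and the sample answers are hypothetical and do not describe Evalmee's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class McqRubric:
    """Hypothetical scoring rules for a single-answer MCQ."""
    correct: float
    wrong: float
    blank: float = 0.0


def grade(responses, answer_key, rubric):
    """Score one examinee's stored responses against the answer key."""
    total = 0.0
    for question, correct_choice in answer_key.items():
        given = responses.get(question)
        if given is None:
            total += rubric.blank      # question left unanswered
        elif given == correct_choice:
            total += rubric.correct
        else:
            total += rubric.wrong      # penalty is a negative number
    return total


def regrade_all(submissions, answer_key, rubric):
    """Recompute every examinee's grade from the same stored responses."""
    return {name: grade(r, answer_key, rubric) for name, r in submissions.items()}


# Illustrative data only.
answer_key = {"Q1": "B", "Q2": "D", "Q3": "A"}
submissions = {
    "alice": {"Q1": "B", "Q2": "C", "Q3": "A"},
    "bob": {"Q1": "A", "Q2": "D"},  # Q3 left blank
}

harsh = McqRubric(correct=1.0, wrong=-1.0)
lenient = McqRubric(correct=1.0, wrong=-0.25)

before = regrade_all(submissions, answer_key, harsh)
after = regrade_all(submissions, answer_key, lenient)
print(before)
print(after)
```

Because the examinees' answers are stored independently of the scoring rules, changing the rubric only requires recomputing the totals; nobody has to retake anything.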

Exam duplication with sessions

Duplicate an exam with its sessions in one click. If you duplicate with examinees, their respective groups are now preserved as well.

✨ Other Improvements

Email invitations: you can now set or edit the email template when sending invitations from the "examinees" page.

Examinee tracking: improved display of "All" / "Not started" / "In progress" / "Completed" filters

Fraud detection: browser zoom is no longer flagged as window resizing

🐞 Bug Fixes

Exam editor: the points editor now updates immediately

Criterion-based grading: fixed a bug that could prevent assigning 0 points to a criterion in some cases